Heinz von Foerster, the renowned Austrian-American physicist and cybernetics scholar, declared that “information can be considered as order wrenched from disorder.”1 Ever-increasing amounts of digital data and new computational tools promise that technological developments such as artificial intelligence (AI) will bring order, clarity, and new solutions in many areas, from transportation to criminal justice.

Solutions are clearly needed in healthcare, particularly in the U.S., where high expenditures have not led to corresponding improvements in health outcomes.

Computer-assisted clinical decision support tools have been used in medicine since the 1970s, but recent advances in machine learning have produced stunning successes, such as computer programs that classify skin cancers as accurately as board-certified dermatologists.2

There is growing excitement for ubiquitous healthcare, where tiny wireless sensors are able to constantly monitor, collect and transmit health data.3 And while IBM’s Watson Health has faced some challenges with implementation, there is continued optimism and investment that combining big data with advanced techniques such as machine learning will lead to improvements in cancer diagnosis and treatment.

As excitement for AI in medicine abounds, there is, at the same time, a bleak picture of health disparities in the U.S., particularly in cancer.

Racial minorities continue to have disproportionately higher incidence and mortality rates for multiple cancers, including breast, kidney, and prostate cancer.4

How can we bring together the excitement for the possibilities of AI in medicine with the sobering reality of stubborn health disparities that remain despite technological advances?

This leads to the question: As big data comes to cancer care, how can we ensure that it is addressing issues of equity, and that these new technologies will not further entrench disparities in cancer?

This is an important question to ask now because, although there is growing work on the limits of AI in medicine and on its ethical implications, there has been very little explicit attention to how AI can affect health disparities.

We believe this is an important addition to the commentary on AI and health: it addresses both technical and ethical challenges and brings a social justice lens to AI’s implications for health equity. We focus on issues of health equity in the U.S., but we hope the ideas presented here can catalyze thinking about health disparities more globally.

Today, AI looms large in both the scientific and public imaginary. Thanks to services like Netflix, many more people understand that AI is not scary science fiction but a set of algorithms (recipes, in the most basic sense) that use data to carry out any number of tasks, such as predicting and recommending entertainment choices.

In medicine, AI experienced a heyday in the 1990s with the development of automated expert systems that provided clinical advice, then a “winter” of limited development, and, over the last eight years, a resurgence driven by rapid advances in techniques such as machine learning, which can be applied to large datasets to categorize data and make predictions.5

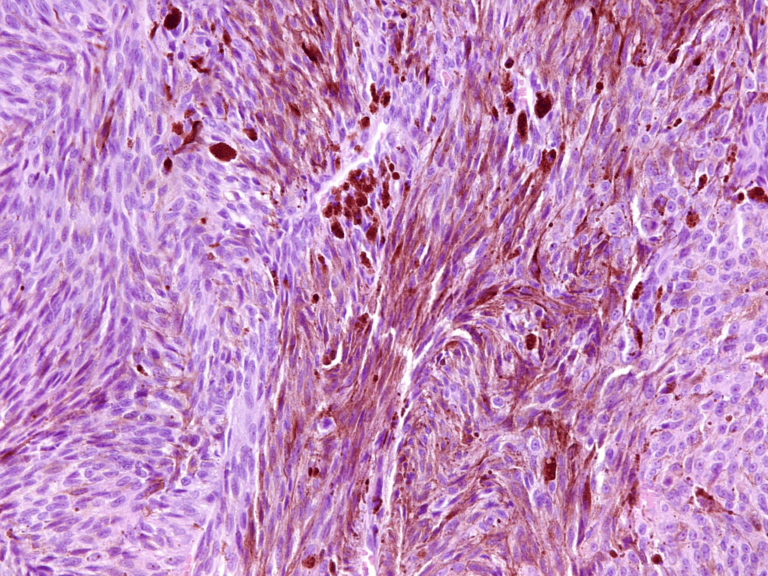

Machine learning has been used to identify cancers in medical images, to inform changes to drug treatment protocols, and to predict a range of clinical outcomes. These technologies can capitalize on the availability of digital health data, such as images, electronic medical records, prescription records, medical literature, and health insurance data, by analyzing the information faster than humans can and by potentially combining these sources to reveal previously unknown predictors of disease or adverse drug reactions.6

However, as others have pointed out, AI does have limitations. On the technical side, it is important to understand that AI is powered by data.

As the adage “garbage in, garbage out” (GIGO) reminds us, AI applications are only as good as the data used to “train” them.7 Incomplete and biased data will lead to incomplete and biased results.

For example, some have argued that IBM’s Watson Health system is biased because it relies on input from U.S.-based doctors at a single institution and therefore reflects that institution’s particular treatment preferences rather than expert knowledge alone.8

AI models may also be accurate in predicting events while simultaneously having inflated false negative rates. In addition, even accurate predictions can be misleading. In one example, an AI predicted that patients with asthma had a lower risk of death from pneumonia than those without asthma, a pattern that reflected the more aggressive care asthmatic patients received rather than any protective effect of asthma.9
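The first point, that a model can look accurate while missing many true cases, is easiest to see with a small worked example. The Python sketch below uses invented numbers (1,000 hypothetical patients, 50 of whom have the condition) and plain helper functions written only for illustration (`confusion_counts`, `accuracy`, `false_negative_rate`); it is not drawn from any study cited here.

```python
# Hypothetical illustration: a screening model evaluated on 1,000 patients,
# of whom only 50 actually have the condition (5% prevalence).

def confusion_counts(y_true, y_pred):
    """Count true/false positives/negatives for binary labels (1 = disease)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return tp, fn, fp, tn

def accuracy(y_true, y_pred):
    """Share of all patients the model labels correctly."""
    tp, fn, fp, tn = confusion_counts(y_true, y_pred)
    return (tp + tn) / len(y_true)

def false_negative_rate(y_true, y_pred):
    """Share of truly sick patients the model labels as healthy."""
    tp, fn, _, _ = confusion_counts(y_true, y_pred)
    return fn / (tp + fn)

# Synthetic labels: 50 sick patients followed by 950 healthy ones.
y_true = [1] * 50 + [0] * 950
# Synthetic predictions: the model catches only 10 of the 50 sick patients
# and raises 5 false alarms among the 950 healthy ones.
y_pred = [1] * 10 + [0] * 40 + [1] * 5 + [0] * 945

print(f"accuracy = {accuracy(y_true, y_pred):.3f}")                        # 0.955
print(f"false negative rate = {false_negative_rate(y_true, y_pred):.3f}")  # 0.800
```

Because 95% of these hypothetical patients are healthy, a model that misses 40 of the 50 sick patients still scores 95.5% accuracy, which is why accuracy alone says little about whether a tool is safe to use for screening.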

These technical limitations, and the possibility of “algorithmic bias,” mean that it is important to keep “humans in the loop” in AI systems, even as those systems become more advanced and accurate. Humans are needed to check the quality of the data and to interpret and contextualize the results.

Because of the nature of clinical care, AI systems in health must be transparent to some degree, not impenetrable “black boxes” that clinicians cannot access, understand, or explain to their patients.10

On the ethics side, we need to consider that AI has the potential to shift clinical practice, especially in fields such as radiology and pathology that center on categorization.

Legal scholars have also pointed out that AI has the potential to change the doctor-patient relationship, as well as the regulations around malpractice if AI systems become a major factor in making predictions and diagnoses.11

Clinicians’ domains of responsibility may need to be reconceptualized, and we will need to assess how AI could affect the workarounds clinicians devise to compensate for the limitations of technological systems.12

Important as these issues are, they do not address how the growing use of AI in medicine could affect the most vulnerable, particularly those already bearing disproportionate disease burdens.

The “GIGO” problem, for example, is troubling for everyone but particularly concerning for some groups. It has long been known that clinical trial data do not reflect the diversity of the U.S. population, so using these data in AI systems can exacerbate existing biases.

Likewise, the increasing use of electronic health records in AI systems favors groups with robust health data profiles over those with limited access to health care, discontinuous care, and spotty, incomplete records.13
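Aggregate performance figures can hide exactly this kind of gap. As a hedged sketch with entirely synthetic records, the Python example below computes a model’s false negative rate separately for two hypothetical groups, “A” and “B”, where group B contributes far fewer cases. The helper `fnr_by_group` is our own illustration, not a function from any particular library.

```python
from collections import defaultdict

def fnr_by_group(records):
    """Return the false negative rate for each group.

    Each record is a (group, true_label, predicted_label) tuple,
    with 1 = has the condition and 0 = does not.
    """
    positives = defaultdict(int)  # truly sick patients per group
    missed = defaultdict(int)     # sick patients the model labeled healthy
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 0:
                missed[group] += 1
    return {g: missed[g] / positives[g] for g in positives}

# Synthetic data: hypothetical group "A" is well represented,
# hypothetical group "B" has far fewer cases and more of them are missed.
records = (
    [("A", 1, 1)] * 85 + [("A", 1, 0)] * 15   # 100 sick patients in A, 15 missed
    + [("B", 1, 1)] * 10 + [("B", 1, 0)] * 10  # 20 sick patients in B, 10 missed
)

# A single pooled number: 25 missed out of 120 sick patients.
sick = [(g, p) for g, t, p in records if t == 1]
overall_fnr = sum(1 for _, p in sick if p == 0) / len(sick)

print(f"overall FNR = {overall_fnr:.3f}")  # 0.208
print(fnr_by_group(records))               # {'A': 0.15, 'B': 0.5}
```

The pooled false negative rate of about 21% conceals the fact that, in this invented example, the model misses half of the sick patients in group B. Reporting metrics disaggregated by group, rather than a single overall number, is one simple way to surface such disparities before a tool is deployed.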

We must also pay attention to biases embedded in health data, especially when it includes subjective assessments such as clinicians’ notes. There is ample evidence that bias against certain demographic groups makes its way into doctors’ notes, and we must be aware that techniques such as natural language processing could carry these biases into AI systems.

Having humans in the loop may not fully resolve this problem, particularly if an AI system’s results reinforce those humans’ existing biases, such as the belief that some demographic groups are less compliant with treatment than others.

In addition to clinician bias, we must also consider how the lack of diversity among AI developers and engineers shapes the systems they design. Apple designed its Health app to be a comprehensive health tracking tool but did not include menstrual tracking until 2015, an omission that may not be surprising considering that its engineering team is predominantly male.14

This cautionary example illustrates how a lack of diversity on technology development teams can lead them to overlook the needs of specific groups. We must address the potential impacts of algorithmic bias in medicine and pay special attention to how these biases may compound the exclusion and disadvantage of already marginalized groups.

Beyond algorithmic bias, the ethical implications of AI in medicine, such as possible changes in clinicians’ liability and in their obligations to explain AI systems, may again carry higher stakes for people who have limited access to high-quality clinical care, limited health literacy, or earned mistrust of medical providers, and for those who may be exposed to interpersonal and institutional racism and other discrimination in their health care encounters.

For these groups, unaddressed ethical problems are more likely to translate into real harm, increasing the potential to reinforce or exacerbate disparities in health outcomes.

It is important to state plainly the specific challenges that AI in medicine poses to vulnerable groups and its potential to undermine the goal of achieving health equity. Recognizing that AI in medicine can have unjust impacts across demographic groups is an important step. The following three principles form a framework for thinking about both the exciting possibilities of AI in medicine and its potential impacts on health disparities and health equity:

Prioritize health equity in AI in medicine: Efforts should explicitly address how the development of the technology can affect health disparities. Initiatives such as Google’s partnerships with major U.S. academic medical centers to develop AI health applications are promising but lack an explicit equity lens. Health equity should be at the forefront of AI projects in medicine.

Address algorithmic bias for health equity: AI tools are only as powerful as the data that feeds them, so biased data will yield flawed tools (GIGO). Data must include minority and marginalized populations.

Even if datasets include adequate demographic diversity, historical biases may remain in the data. For example, a database used for AI could have an adequate amount of data from black participants, but because of historical inequities, these participants may disproportionately have cancer diagnosed at later stages.

Without attention given to the historical conditions that explain this difference, analysis of this data could lead to inaccurate conclusions about the characteristics of cancer in black populations. Stakeholders must be involved in helping to understand how big data tools can be used to empower communities of color, as well as how these same tools can perpetuate historical discrimination.

There must be a more concerted effort to increase the diversity of AI engineers so the tools that are developed do not just reflect the background, interests, and needs of select groups. Biases can make their way into AI health technologies in invisible ways that can have serious consequences for health outcomes, particularly for marginalized groups. The conversation on big data and bias in health is just beginning, and there is an opportunity to intervene before these tools are disseminated on a wide scale.
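One crude way to act on the call for data that include minority and marginalized populations is to compare each group’s share of a training dataset with its share of the population a tool is meant to serve. The Python sketch below does this with made-up counts and shares and a hypothetical helper, `representation_gaps`; as the example of later-stage diagnoses above shows, a dataset that passes such a check can still carry historical biases.

```python
def representation_gaps(dataset_counts, reference_shares):
    """Compare each group's share of a dataset with its share of a reference population.

    Returns dataset_share / reference_share for each group in the reference;
    values well below 1.0 flag under-representation.
    """
    total = sum(dataset_counts.values())
    return {
        group: (dataset_counts.get(group, 0) / total) / share
        for group, share in reference_shares.items()
    }

# Hypothetical numbers for illustration only.
dataset_counts = {"group_1": 8_000, "group_2": 1_500, "group_3": 500}
reference_shares = {"group_1": 0.60, "group_2": 0.25, "group_3": 0.15}

for group, ratio in representation_gaps(dataset_counts, reference_shares).items():
    print(f"{group}: {ratio:.2f}")
```

In this invented case, the two smaller groups appear at roughly 0.60 and 0.33 times their share of the reference population, while the largest group is over-represented. A ratio near 1.0 is only a starting point: it says nothing about the quality, completeness, or history behind each group’s records.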

Collect non-biological data, too: With big data analysis, we have the opportunity to capture genetic as well as non-biological data (such as environmental records) to help us understand how multiple factors cause or prevent cancer and other diseases. Predictive analytics and AI can provide important evidence supporting the view of cancer as a disease born of the interaction between genes and environment.

Though big data and AI offer exciting possibilities, they will likely not be a “magic bullet.” We must seriously consider not only the impacts of using big data and AI technologies but also whether some of these interventions should be developed at all. It may be wiser to invest in low-tech interventions that are less slick and attractive than these new technologies but just as effective.

This editorial is our call to prioritize health equity in AI in medicine. If we are not intentional about foregrounding equity in AI in medicine (and specifically in the fight against cancer), it is likely that disparities will persist, with advantaged groups receiving the benefits and less-advantaged groups disproportionately absorbing the negative impacts.

We must keep our sights on the staggering health disparities in the U.S., as well as on global health disparities between countries of the Global North and the Global South.

References

Gleick, J. The Information: A History, A Theory, A Flood. (Vintage, 2012).

Esteva, A. et al. Dermatologist-level classification of skin cancer with deep neural networks. Nature 542, 115–118 (2017).

Kim, S., Yeom, S., Kwon, O.-J., Shin, D. & Shin, D. Ubiquitous Healthcare System for Analysis of Chronic Patients’ Biological and Lifelog Data. IEEE Access 6, 8909–8915 (2018).

DeSantis, C. E. et al. Cancer statistics for African Americans, 2016: Progress and opportunities in reducing racial disparities. CA: a cancer journal for clinicians 66, 290–308 (2016).

Peek, N., Combi, C., Marin, R. & Bellazzi, R. Thirty years of artificial intelligence in medicine (AIME) conferences: A review of research themes. Artificial intelligence in medicine 65, 61–73 (2015).

Hamet, P. & Tremblay, J. Artificial intelligence in medicine. Metabolism 69, S36–S40 (2017).

Van Poucke, S., Thomeer, M. & Hadzic, A. 2015, big data in healthcare: for whom the bell tolls? Critical Care 19, 171 (2015).

IBM pitched Watson as a revolution in cancer care. It’s nowhere close. STAT (2017). Available at: https://www.statnews.com/2017/09/05/watson-ibm-cancer/. (Accessed: 20 September 2017)

Cabitza, F., Rasoini, R. & Gensini, G. F. Unintended Consequences of Machine Learning in Medicine. JAMA (2017). doi:10.1001/jama.2017.7797

Price, W. N. Black-Box Medicine. (Social Science Research Network, 2014).

Froomkin, A. M., Kerr, I. R. & Pineau, J. When AIs Outperform Doctors: The Dangers of a Tort-Induced Over-Reliance on Machine Learning and What (Not) to Do About it. (Social Science Research Network, 2018).

Char, D. S., Shah, N. H. & Magnus, D. Implementing Machine Learning in Health Care — Addressing Ethical Challenges. New England Journal of Medicine 378, 981–983 (2018).

Ferryman, K. & Pitcan, M. Fairness in Precision Medicine. (Data and Society Research Institute, 2018).

Epstein, D. A. et al. Examining menstrual tracking to inform the design of personal informatics tools. in Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems 6876–6888 (ACM, 2017).