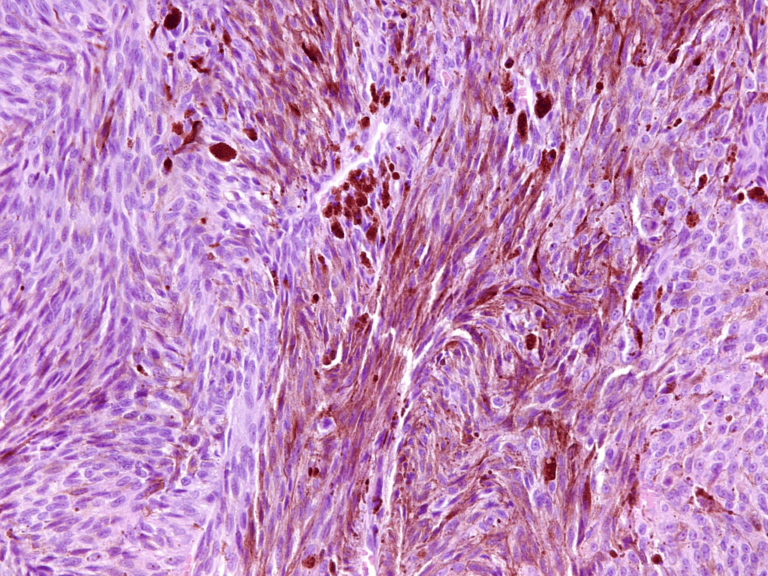

On Jan. 9, 2015, The Cancer Letter reported that Duke University received information in early 2008 that called into question the validity of the methodology and results published by the Anil Potti research group. Potti, along with his mentor and co-author Joseph Nevins, had galvanized the world of cancer research in 2006 and 2007 with their reports of successful gene expression tests for directing cancer therapy, the “holy grail” of cancer research. The 2008 information came in the form of a letter from a third-year medical student, Brad Perez, who was working in Potti’s lab. The letter, which does not seem to have been given any credence at the time, described with precision the problems that eventually resulted in the termination of clinical trials and the subsequent retractions, beginning in 2011, of at least ten (and counting) papers from major scientific journals.

We know that his letter was read by Potti, Nevins, and various high-level administrators at Duke (1).

We don’t know what those Duke administrators were thinking when they read the cogent and careful summary of “Research Concerns” that Perez had developed about the validity of the high-profile research in which he was participating, and with which he had made himself deeply familiar. Perez made it clear that his reservations were so serious that he was knowingly jeopardizing his career by taking the extraordinary steps of challenging his boss, leaving the Potti laboratory early, and removing his name from four papers destined for prestigious journals. Indeed, in his measured words, he chose to repeat an entire year of his medical education to replace his time in the Potti lab with “a more meaningful” research experience.

We do know that the concerns expressed by Perez, which only very recently became public knowledge, were not the only ones known to the Duke administration in 2008. By the time Perez raised his concerns, the MD Anderson biostatisticians Keith Baggerly and Kevin Coombes had been trying and failing to replicate the research methods. We know this led them to seek information about, and then to question, the methods and data in the Potti/Nevins papers, starting shortly after the first reports of successful genomic predictors were published.

We do know that Duke referred Perez to Potti’s boss, Dr. Nevins, to discuss his concerns. We know that, at the time, Nevins was deeply invested in the success of Potti’s research through co-authorship, co-inventorship, and his status as co-founder of the company built around it.

We know that, after meeting with Nevins, Perez acceded to what he understood to be advice not to share his concerns with his funding source, the Howard Hughes Medical Institute, and that he did so because he trusted Dr. Nevins to review the research in light of his concerns. We know that no one at Duke looked at the data or the methods Perez questioned.

We know that Nevins, who promised to and then did not examine the data, testified that, in his eventual 2010 review, it took him less than an hour to find “abundantly clear” manipulations in the data. It appears that Nevins only looked at the data after it was revealed that Potti was not the Rhodes Scholar he had claimed to be on his CV and in federal grant applications.

We know that the same research was the basis for clinical trials that began in 2007 and eventually enrolled more than 100 patients, all of whom were seriously ill with advanced cancer. We know the informed consent documents signed by the patients described the trial in this way:

“The purpose of this study is to evaluate a new tool, a genomic predictor…In initial studies, the genomic predictor seemed to determine which drug would be effective in a given patient with an accuracy of approximately 80%.”

We know that the results did not have an accuracy of 80 percent, because the research was error-ridden and manipulated.

We know that the clinical trials were, in the words of a Duke administrator, based on a theory that was “a dud.” Given what is known about the research, the trials should never have been run.

We know that this state of affairs persisted from early 2008 to late 2010, when the clinical trials were finally terminated. Achieving that outcome took extraordinary persistence by Drs. Baggerly and Coombes, concerns expressed by the National Cancer Institute, an unprecedented letter signed by more than 30 prominent statisticians expert in this area, the indefatigable coverage of The Cancer Letter, the revelation of a fudged CV, and unrelenting publicity.

We know that when the Potti lab’s research was later the subject of multiple investigations, Duke administrators didn’t reveal the Perez letter. They didn’t share it when a clinical trial based on the research was suspended in 2008 while external statisticians reviewed the methodology. They didn’t report it to the National Cancer Institute. They didn’t provide it to the Institute of Medicine Translational Omics review committee. They testified to investigators that Duke had not had any whistleblower reports (p. 257), and that “in no instance did [any co-authors] make any inquiries or call for retractions until contacted by Duke.” (p. 251)

Of course, by the time they were asking, Perez had withdrawn his name and was no longer a co-author.

Is this really how it is supposed to work? Should detecting and stopping bad and harmful research be this hard? Even when the research provides a beguiling and well-funded vision of possibilities? When even its critics would like to find it valid?

How Does This Happen?

In 1993, Drummond Rennie and I wrote that the modern era of what we now call scientific misconduct dates to 1974. (2) By 1993, a fair amount was known about how to build institutional structures to promote research integrity. I wrote a summary that year in Academic Medicine, a portion of which reads: “institutions must establish a misconduct review process that can render objective, fact-based decisions untainted by personal bias and conflicts of interests. In developing such a process, leaders must be aware of probable pitfalls, establish an accessible structure, and provide for consistent assessment of allegations and complaints, focusing on facts, not personalities.” (3)

As this situation shows, almost two decades on, even a research powerhouse like Duke can struggle with these fundamentals. Responding to allegations of misconduct by members of one’s own institution is hard. (It’s harder to go in and investigate effectively as an outsider, though both can and must be done at times.) There are far more ways to go wrong than you might imagine, especially when experience shows that most concerns expressed turn out not to have any factual basis, but to be rooted in personality conflicts, misunderstandings, and other unhappiness. In a career as a university administrator—in research administration and in a provost’s office handling problems, conducting internal investigations, developing policy, and ultimately focusing on training for preventing problems—I made many mistakes and I have observed other well-meaning, earnest, and honorable people make mistakes. My starting assumption is that the actions of Duke’s administrators were just as well-meaning and well-intended. At the same time, we have by now accumulated a considerable body of knowledge about the cognitive errors and procedural shortcomings that can infect these situations, and it is time for us as a community to begin to heed and apply those lessons.

The failings in this matter are not unique to Duke. They are not new. They map closely to other failings that have been seen repeatedly over many years at high-powered, well-funded, sophisticated research institutions. Whether the result of the cognitive biases to which we are all susceptible (confirmation bias, egocentrism, gain-loss aversion, self-deception, etc.), the incentives and pressures of today’s biomedical research system, (4) the exceedingly short half-life of institutional memory and experience, or motivated blindness, (5) the consequences are severe and impose great costs upon many.

The integrity of institutions is rooted in individual actions that must be supported and reinforced. It takes constant effort to encourage people to do the right thing for the right reasons in the face of temptations, distractions, and money. One mechanism for achieving that is to focus on inculcating an integrity mindset that finds ways to prompt and remind those faced with fighting fires of the larger stakes. Immediacy and promise can, and often do, subvert long-term institutional best interests. Exploring how this pattern repeats itself can help us understand how this could have happened. Let’s start with three glaring examples illustrated here that recur in practically every botched research misconduct investigation: fundamental mischaracterizations of matters in front of one’s face, excessive deference, and conflicts of interest.

1. Mischaracterizing the Situation

There are two key junctures here where the consequences of mischaracterization are particularly painful. First, Duke officials have emphasized throughout this sad affair that top-notch clinical treatment was provided to the trial patients. This misses the point. An emphasis on treatment minimizes and diverts attention from the profound breach of responsible conduct of the research that is the raison d’être of a research institution. Second, they seem to have categorized the 2008 letter from Perez as a difference of opinion among researchers raised by a junior member of the team, or as a case of a student who doesn’t get it and needs to be referred back to the supervisory chain, instead of as one raising important red flags about the integrity of research.

Let’s look at the effects of each of these mischaracterizations.

A. Mischaracterizing the Situation: Clinical Treatment vs. Research Integrity

In the “Deception at Duke” 60 Minutes report in 2012, CBS quotes Duke’s assurances that no one was really harmed, because all patients received the standard of care in chemotherapy. Yet patient treatment is not the primary goal of a clinical trial in which patients provide their informed consent for participating in research that is testing experimental interventions.

In the words of the survivors of some of the patients now suing Duke, the patients were seeking a chance at the “silver bullet” for survival, even with stage four cancer. (6) They were not asking “is this the best available treatment?” To be provided that was a given. They were asking “does this research give me—or someone else—a better chance than current treatments?” In their dire straits, they wanted the smartest doctors using the newest techniques based on the latest research.

The Duke clinical trials were using genomic predictors that were based on research at the Potti lab. In effect, the test was being tested. The patients were there because of the research. In consequence, the research misconduct is the key to what went wrong.

If you ask the wrong question, you are likely to get the wrong answer. The questions Duke’s officials should have been asking were about their obligations to patients making themselves subjects for Duke’s research, their obligations to the integrity of Duke’s research mission, and their obligation to keep faith with their patients.

A Distressing Consequence: Duke’s Legal Strategy

Focusing on the standard-of-care treatments the patients received, Duke is taking an aggressive legal posture in lawsuits filed around the terminated clinical trials. While these filings are not the direct words of specific administrators, they are made on behalf of the institution and in Duke’s name.

In essence, Duke’s pending motions to dismiss some of the claims against it argue that the patients would have died anyway, given their advanced cancers. This is at odds with the hopes provided by Dr. Potti and by Duke’s advertisement with Anil Potti in it which starts: “Duke has made a commitment to fight this war against cancer at a much higher level.”

Here are some direct quotes from Duke’s legal filings: (7)

- patients received “standard-of-care therapy”;

- patients knew they were participating in a trial that might not have any benefit for them;

- Duke did not “abuse, breach or take advantage of [patients’] trust;”

- Duke did not “place Duke’s interests ahead of” those of the plaintiffs;

- Duke’s and patients’ “interests were never in conflict”;

- Duke was “open, fair and honest” regarding “prognosis and treatment options;”

- “plaintiffs cannot show that they were injured by any act or omission by Duke.”

In sum, Duke’s legal position is that it cannot be shown that “a different course of action would have made any difference” in chances of survival.

Is that really the point?

Dr. Potti’s separate legal team (he left Duke after the revelation about his CV falsification) takes a similar stance, that no different course of medical treatment would have affected the outcome. (That is, the same refrain that the patients would have died anyway.) Further, his team asserts that “Plaintiffs cannot establish that Dr. Potti committed professional negligence or that he benefited in any way from enrolling [name] in the trial.”

Put colloquially, “no harm, no foul”?

My litigator friends assure me that taking an aggressive legal posture to pare down charges and keep the most emotionally laden aspects of the situation away from a jury is the legal equivalent of standard of care. If Duke’s administrators are taking advice from their legal counsel, and their legal counsel is following the guidance of their external lawyers, one can see how they came to sign off on this defense: Duke does a lot of good for a lot of people and there’s a lot of money at stake; perhaps they already have significant offers on the table in negotiations with the plaintiffs. Maybe they see this approach as protecting the institution of which they are stewards. But is it wise? Can it possibly be good for Duke? Can it be good for Duke patients?

The mismatch between scientific values and the rules of the road in the legal system, which are all too often shown in cases involving scientific misconduct, is on full display here.

How will this strategy affect those who rely on public trust to enroll patients in clinical trials? Is this approach healthy for the integrity of the medical research enterprise?

B. Mischaracterizing the Student Letter

The Perez letter is a model of professional restraint and clarity. It references items that could have been checked without relying on his personality or credibility. Even so, Duke administrators sent Perez back to Nevins, a vitally interested party, without any apparent independent assessment of the information in his letter. Without knowing more, this action seems to violate several common-sense, foundational principles of institutional checks and balances.

By now, in our post-Watergate society, we should all understand that even the appearance of a cover-up is enough to provoke interest. While the lessons of painful experience do not come intuitively to investigators whose work is challenged, institutional officials should understand—and be able to convey persuasively—the reality that the moment at which credible questions surface about the validity of work is the precise time to seek an outside perspective, not to circle the wagons.

An integrity mindset recognizes that if the concerns are valid, an internally commissioned review permits questions to be addressed at the earliest possible moment, and problems to be corrected relatively quietly. If the concerns are unfounded, these actions create a record that can protect the researcher and the institution if the claims are perpetuated or disseminated.

Referring a vulnerable research student back to those on the ladder above him is not appropriate when there are very significant power differences between the parties; when the concerns, if true, have serious consequences; and when the vulnerable party has already made attempts to discuss the matter with those most directly affected. (8) All three of those conditions were met in the questions raised by Perez, the more so since his correspondence makes it manifestly clear that he tried to raise his concerns directly with Potti and had been rebuffed.

The question in this situation shouldn’t have been “why doesn’t this med student get it?” The question should have been “what if he is right” or, perhaps, “how do we make sure he is not right?”

The harm from getting the most central questions wrong about Duke’s obligations to themselves, their patients, and their students was compounded by two classic elements present in other institutional failures to sustain research integrity: excessive deference to powerful researchers and conflicts of interest.

2. Too Much Deference

Duke administrators told the IOM panel that there was perhaps too much deference to “esteemed” (p. 122) and trusted faculty members.

This is not new in trials overseen by the National Cancer Institute. It is not new in cases of research misconduct. It is especially prevalent in cases not revealed until long after serious damage has been done because institutional officials are reticent about ruffling the feathers of prominent scholars.

As early as 1994, Samuel Broder, NCI director at the time, testified before Congress about the institute’s failure to act on deviations from the approved enrollment protocol in breast cancer trials. These deviations were ultimately revealed through newspaper reporting, not through the institute’s own staff, who had been raising concerns for years. Broder diagnosed the cause as follows: “I believe it is, in part, a function of [the PI’s] formidable reputation…a pioneering figure who obviously knew a great deal. I believe there was an excessive level of collegiality and a higher level of tolerance than is now the case.”

One can argue about the details of what happened in that specific case—the controversy at the National Surgical Adjuvant Breast and Bowel Project. However, the behavior Broder describes here—deferring to luminaries—is far from unique to this case. We see it again and again.

Even though multiple institutions have learned this lesson the hard way, we seem to have a difficult time retaining and acting on it, especially when there’s potentially a lot of money at stake. The cure is as straightforward as it is difficult: when problems arise, the focus must be shifted to the big picture, and the right questions posed to the right people with tact, finesse, and backbone.

3. Not Recognizing or Guarding Against Pervasive Conflicts of Interest

The 2012 IOM report concludes that “there is evidence that some of those involved in the design, conduct, analysis, and reporting of the three clinical trials and related trials involving the gene-expression-based tests had either financial or intellectual conflicts of interest that were not disclosed….according to [one Duke official], there was a great deal of confusion within the university at this time about when a patent and an intellectual property interest qualified as a conflict.” (p. 255-56)

Really?

By 2008, there were scores of articles in biomedicine demonstrating the profound influence of money (and potential money) on researchers, on institutions, on physicians, on results, not to mention reports, meetings about conflicts of interests, conferences, white papers, and policy guidelines. My own first publications about conflicts of interest were written in 1989, and I wasn’t a trailblazer in the field. (9)

Interestingly, in 2001-02, the Association of American Medical Colleges and the Association of American Universities issued two reports on conflicts of interests in human subject research—one on individual and one on institutional conflicts. A Duke School of Medicine administrator was a member of the task force that developed them. The policies and guidance were updated in February 2008. Duke was also represented on that panel.

Duke had filed patents on the Nevins/Potti research, filmed a commercial for the institution featuring Dr. Potti, and held shares in the company founded to commercialize the work. The prospects were not just of financial return, but of significant wealth and renown. This was true for individuals throughout the university, and it was true for the institution itself. Stewards of a great institution are responsible for recognizing and guarding against conflicts of interest that might impair evenhanded assessments of facts, even those brought forward by a junior member of a research team or outsiders. There are policies, guidelines, and recommendations galore. What will it take to implement them more consistently and effectively?

Along with many other cases of mishandled research misconduct, this unfolding story illuminates the effect of systemic incentives and pressures in the world of big money research that can entice even the well-meaning away from an integrity mindset to one that, in the short-term, defers to power and fails to ask the right questions. Because humans are fallible, it behooves the institutions in which they work to build checks and balances, and structures that can take a longer view and ask the right questions at the right times.

Final Thoughts

Academic research operates on trust. It is critical to be able to trust what’s in the published literature. As the Nobel Laureate Joshua Lederberg put it, “above all, a publication is inscription under oath, a testimony.” (10) The authors on the byline are directly responsible to the readers. Potti had a great number of co-authors. How is it that none of them saw the problems that were obvious to Baggerly, Coombes, and Perez?

Ironically, because the Institute of Medicine review committee was informed that Duke had contacted all 162 collaborators who were co-authors on all 40 papers by Anil Potti, and “in no instance did anyone make any inquiries or call for retractions until contacted by Duke,” the IOM committee concluded that “This experience suggests the need for co-authors to have more shared responsibility for the integrity of the published research.” (p. 251)

Too bad they didn’t hear about the one person who saw such significant problems with the work that he asked for his name to be withdrawn: the medical student who took his authorship obligations so seriously that he set his own career back in order to honor them.

The author is director of the National Center for Professional and Research Ethics (www.nationalethicscenter.org); research professor, Coordinated Science Laboratory; and professor emerita, College of Business, at the University of Illinois at Urbana-Champaign. She runs a consulting company and is the author of The Young Professional’s Survival Guide (Harvard University Press, 2012) and The College Administrator’s Survival Guide (Harvard University Press, 2006).

Acknowledgements: Drummond Rennie reviewed and provided content suggestions and revisions for multiple drafts resulting in significant improvements. Brian Martinson raised an important question for consideration. Anna Shea Gunsalus assisted with research and editing.

Endnotes:

1. Unless otherwise noted, information is from documents and reporting published in The Cancer Letter.

2. Gunsalus, C. K. and Drummond Rennie, “Scientific Misconduct: New Definition, Procedures, and Office—Perhaps a New Leaf.” Journal of the American Medical Association, Vol. 269, No. 7, February 17, 1993.

3. Gunsalus, C. K. “Institutional Structure to Ensure Research Integrity.” Academic Medicine, Vol. 68, No. 9, September Supplement 1993.

4. Alberts et al., PNAS paper: http://www.pnas.org/content/111/16/5773.abstract

5. Max Bazerman, The Power of Noticing: What the Best Leaders See. Simon and Schuster, New York, 2014.

6. This and succeeding quotes from Motions for a Summary Judgment, filed in Aiken et al. vs. Duke University et al., filed in Durham County, N.C., Superior Court, posted by The Cancer Letter, Jan. 23, 2015 (Walter Jacobs testimony in depositions, quoted in plaintiff memo in summary judgment motions, 12.12.14, page 10).

7. All quotes from Motions for a Summary Judgment, filed in Aiken et al. vs. Duke University et al., filed in Durham County, N.C., Superior Court, posted by The Cancer Letter, Jan. 23, 2015.

8. See Gunsalus papers: “How to Prevent the Need for Whistleblowing: Practical Advice for University Administrators” and “How to Blow the Whistle and Still Have a Career Afterwards.”

9. Gunsalus, C. K. and Rowan, Judith. “Conflict of Interest in the University Setting: I Know It When I See It.” Journal of the National Council of University Research Administrators, Fall 1989.

10. Lederberg, J. (1991) Communication as the Root of Scientific Progress (presentation at 1991 Woods Hole Conference of International Scientific Editors). In Stefik, M., ed. (1993) Internet Dreams: Archetypes, Myths and Metaphors, 41. MIT Press, Cambridge.